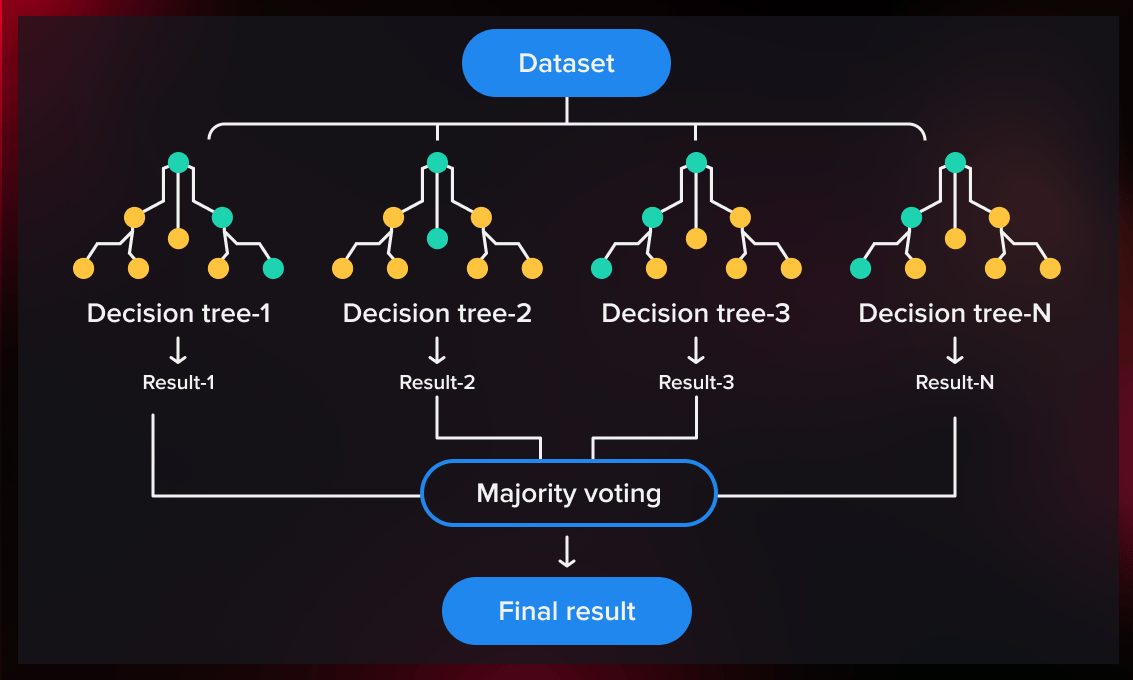

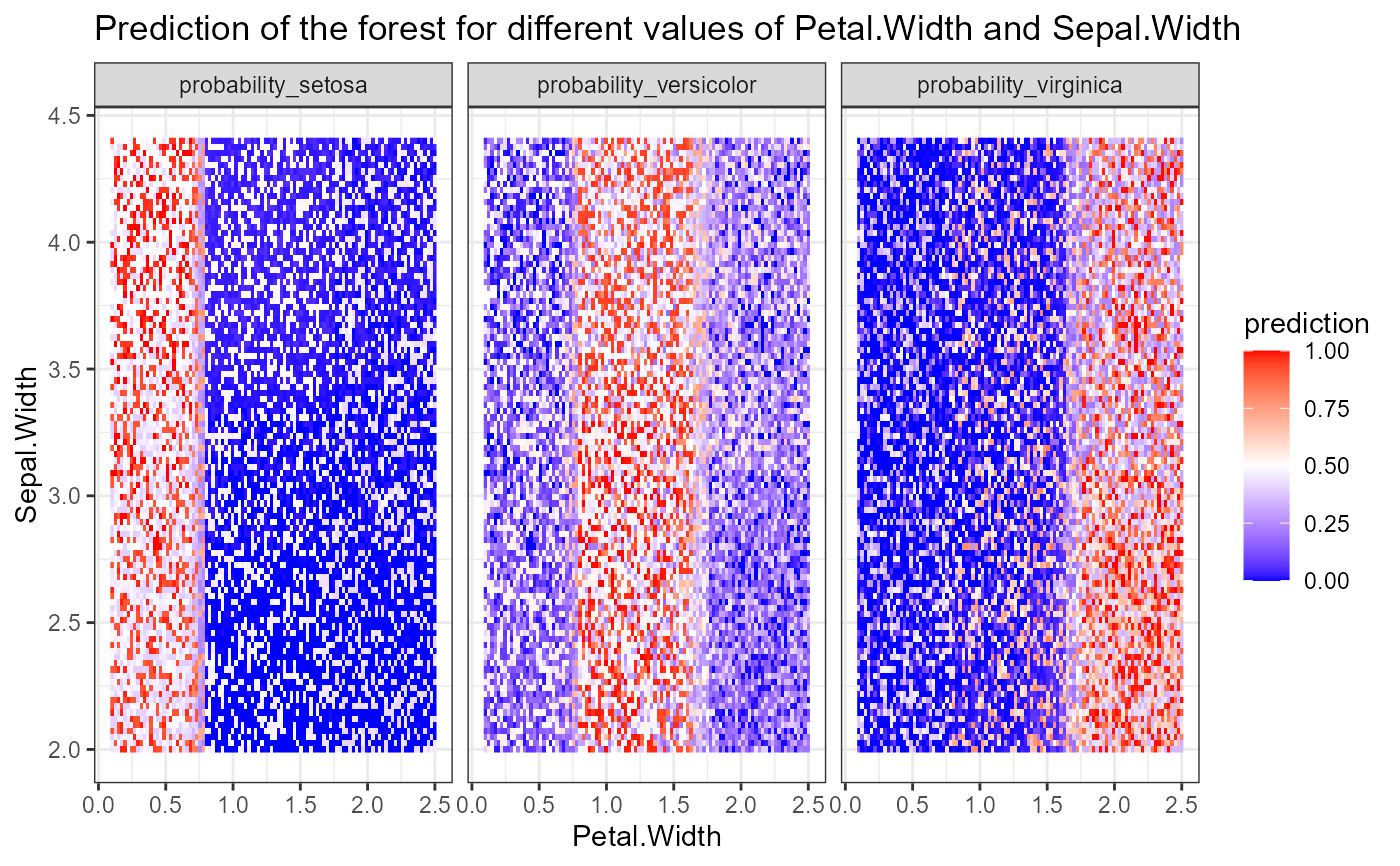

Random forests are among the most popular machine learning techniques for prediction problems. Random Forest is one of the most widely used machine learning algorithms for classification, and it can also be used for regression models. The random forest method is a commonly used tool for classification with high-dimensional data that is able to rank candidate predictors through its inbuilt ... In healthcare, Random Forest can be used to analyze a patient's medical history to identify diseases. When using random forests to predict a quantitative response, an important but often overlooked challenge is the determination of prediction intervals that will contain an unobserved response value with a specified probability.

Algorithm 15.1, Random Forest for Regression or Classification. For b = 1 to B: (a) Draw a bootstrap sample Z of size N from the training data. (b) Grow a random-forest tree T_b to the bootstrapped data, by recursively repeating the following steps for each terminal node of the tree, until the minimum node size n_min is reached.

We compare random forest variable selection methods for different types of datasets (datasets with binary outcomes, datasets with many predictors, and datasets with imbalanced outcomes) and for different types of methods (standard random forest versus conditional random forest methods, and test-based versus performance-based methods). Based on our study, the best variable selection methods for most datasets are Jiang's method and the method implemented in the VSURF R package. For datasets with many predictors, the methods implemented in the R packages varSelRF and Boruta are preferable due to computational efficiency. A significant contribution of this study is the ability to assess different variable selection techniques in the setting of random forest classification in order to identify preferable methods based on applications in expert and intelligent systems.

Keywords: classification, feature reduction, random forest, variable selection.
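The bootstrap-and-grow loop of Algorithm 15.1 can be sketched in a few lines of plain Python. This is only a rough illustration, not the textbook procedure in full: each "tree" here is a depth-one stump, and the helper names (`bootstrap_sample`, `fit_stump`, `fit_forest`, `predict`), the toy data, and the parameter `m` (number of features tried per split) are all assumptions made for the example.

```python
import random
from collections import Counter

def bootstrap_sample(X, y, rng):
    """Step (a): draw N rows with replacement from the training data."""
    n = len(X)
    idx = [rng.randrange(n) for _ in range(n)]
    return [X[i] for i in idx], [y[i] for i in idx]

def fit_stump(X, y, feature_ids):
    """Step (b), simplified to one split: pick the feature/threshold pair
    with the fewest misclassified rows among the candidate features."""
    majority = Counter(y).most_common(1)[0][0]
    best = None  # (errors, feature, threshold, left_label, right_label)
    for f in feature_ids:
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            if not left or not right:
                continue
            left_label = Counter(left).most_common(1)[0][0]
            right_label = Counter(right).most_common(1)[0][0]
            errors = (sum(lab != left_label for lab in left)
                      + sum(lab != right_label for lab in right))
            if best is None or errors < best[0]:
                best = (errors, f, t, left_label, right_label)
    if best is None:  # no valid split, e.g. only one distinct value
        return lambda row: majority
    _, f, t, left_label, right_label = best
    return lambda row: left_label if row[f] <= t else right_label

def fit_forest(X, y, n_trees=25, m=1, seed=0):
    """For b = 1..B: bootstrap, pick m random features, grow a stump."""
    rng = random.Random(seed)
    p = len(X[0])
    forest = []
    for _ in range(n_trees):
        Xb, yb = bootstrap_sample(X, y, rng)
        features = rng.sample(range(p), m)
        forest.append(fit_stump(Xb, yb, features))
    return forest

def predict(forest, row):
    """Classification: majority vote over the individual trees."""
    votes = Counter(tree(row) for tree in forest)
    return votes.most_common(1)[0][0]

# Toy data (hypothetical): two well-separated classes.
X = [[1.0, 1.0], [2.0, 1.5], [1.5, 2.0], [6.0, 7.0], [7.0, 6.0], [6.5, 6.5]]
y = [0, 0, 0, 1, 1, 1]
forest = fit_forest(X, y)
print(predict(forest, [0.5, 0.5]), predict(forest, [8.0, 8.0]))
```

A full implementation would keep splitting each terminal node until the minimum node size is reached and would draw a fresh random feature subset at every node rather than once per tree.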
Random forest classification is a popular machine learning method for developing prediction models in many research settings. Often in prediction modeling, a goal is to reduce the number of variables needed to obtain a prediction in order to reduce the burden of data collection and improve efficiency. Several variable selection methods exist for the setting of random forest classification; however, there is a paucity of literature to guide users as to which method may be preferable for different types of datasets. Using 311 classification datasets freely available online, we evaluate the prediction error rates, number of variables, computation times and area under the receiver operating curve for many random forest variable selection methods.

The final predictions of the random forest are made by averaging the predictions of each individual tree. ... score yourself as this is an extremely trivial function: `print np.mean(clf.` ...

Predicting risk for adverse health events using random forest. Is Anesthesia Technique Associated With a Higher ...
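To make the averaging step and the prediction error rate concrete, here is a minimal sketch in plain Python. The function names and the numbers are made up for illustration; the per-tree probabilities are hypothetical, not output from a real model.

```python
def forest_probability(tree_probs):
    """Average the class-1 probabilities reported by the individual trees."""
    return sum(tree_probs) / len(tree_probs)

def error_rate(y_true, y_pred):
    """Fraction of examples whose predicted label differs from the true one."""
    mismatches = sum(t != p for t, p in zip(y_true, y_pred))
    return mismatches / len(y_true)

# Hypothetical per-tree probabilities for one example.
tree_probs = [0.2, 0.9, 0.7, 0.6]
p_hat = forest_probability(tree_probs)

# Hypothetical true labels and forest predictions for four examples.
y_true = [1, 0, 1, 1]
y_pred = [1, 1, 1, 0]
err = error_rate(y_true, y_pred)
print(p_hat, err)
```

Accuracy is simply one minus this error rate, which is why computing the score by hand is a one-line mean over matching labels.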

`min_weight_fraction_leaf=0.0, n_estimators=10, n_jobs=1, oob_score=False, random_state=None, verbose=0,`

Random forest models are widely used in many application domains due to their performance and the fact that their constituent decision trees carry clear ... The random forest combines hundreds or thousands of decision trees, trains each one on a slightly different set of the observations, and splits nodes in each tree considering a limited number of the features. The random forest regression model is used for prediction. This will predict the low and high values of the next trading days, which includes the future prices for the next five days, one month ...

`max_depth=None, max_features='auto', max_leaf_nodes=None,`

The authors [8] investigated the supervised machine learning algorithms Naive Bayes, Random Forest and Decision Tree for the prediction of anemia. Advantages and disadvantages of Random Forest: it reduces overfitting in decision trees and helps to improve accuracy; it is flexible to both classification ...

Confidence intervals will provide you with a possible "margin of error" for the output class probability. So, let's say the RF output for a given example is 0.60. The usual approach is to assign that example to the ...
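One rough way to attach a margin of error to an output such as 0.60 is a normal-approximation (Wald) interval on the fraction of trees voting for the class. This is a swapped-in technique chosen for illustration, not necessarily what the quoted answer had in mind, and it rests on a strong assumption: it treats the trees as independent votes, which trees in a forest are not, so the interval is likely too narrow. The function name `wald_interval` and the choice of 10 trees (matching `n_estimators=10` above) are assumptions.

```python
import math

def wald_interval(p_hat, n_trees, z=1.96):
    """Approximate 95% interval for a vote fraction p_hat from n_trees trees.

    Assumes independent tree votes (not true in a real forest).
    """
    se = math.sqrt(p_hat * (1.0 - p_hat) / n_trees)
    lower = max(0.0, p_hat - z * se)
    upper = min(1.0, p_hat + z * se)
    return lower, upper

low, high = wald_interval(0.60, n_trees=10)
print(round(low, 3), round(high, 3))
```

With only 10 trees the interval is wide and includes 0.5, which suggests that a forest this small gives little certainty about the predicted class for such an example.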

`RandomForestClassifier(bootstrap=True, class_weight=None, criterion='gini',`

Since Random Forest (RF) outputs an estimate of the class probability, it is possible to calculate confidence intervals.

Simply encode labels as integers and everything will work well. In particular you can use LabelEncoder: `from sklearn.ensemble import RandomForestClassifier as RF`
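As a small sketch of the label-encoding advice, the snippet below uses scikit-learn's `LabelEncoder` together with the `RF` alias from the import above. The feature values and the label names are toy data invented for the example.

```python
from sklearn.ensemble import RandomForestClassifier as RF
from sklearn.preprocessing import LabelEncoder

# Toy data (hypothetical): one numeric feature, string class labels.
X = [[11.0], [12.5], [10.2], [14.8], [15.1], [13.9]]
y = ["low", "low", "low", "normal", "normal", "normal"]

# Encode the string labels as integers; classes are sorted alphabetically,
# so "low" becomes 0 and "normal" becomes 1.
encoder = LabelEncoder()
y_encoded = encoder.fit_transform(y)

clf = RF(n_estimators=10, random_state=0)
clf.fit(X, y_encoded)

# Class probabilities for a new example, one column per encoded class.
proba = clf.predict_proba([[12.0]])

# Map the integer prediction back to the original label.
predicted = encoder.inverse_transform(clf.predict([[12.0]]))
print(proba, predicted)
```

Keeping the fitted `encoder` around matters: it is the only record of which integer corresponds to which original label.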
